Introducing AKI.IO: The European AI API for Model Inference

AKI.IO gives teams token-based access to curated AI models on European infrastructure, without the operational burden of running their own GPU stack. The platform is built for production use cases such as chat, image generation, and image editing, and supports migration paths for teams already using OpenAI- or Anthropic-style APIs. Use standard HTTPS requests, keep application logic in your existing stack, and move inference workloads onto EU-hosted infrastructure.

AKI.IO API Features

- Drop-In Replacement: OpenAI- and Anthropic-compatible integration paths

- Fast: Asynchronous, multi-process HPC GPU API servers

- Efficient: Token-based billing with self-service API key setup

- Secure & Compliant:

- Easy Integration: Official client interfaces for JavaScript, Python, and PHP, plus HTTP/JSON integration for other stacks

- Sovereign: EU-hosted inference on infrastructure in certified European data centers

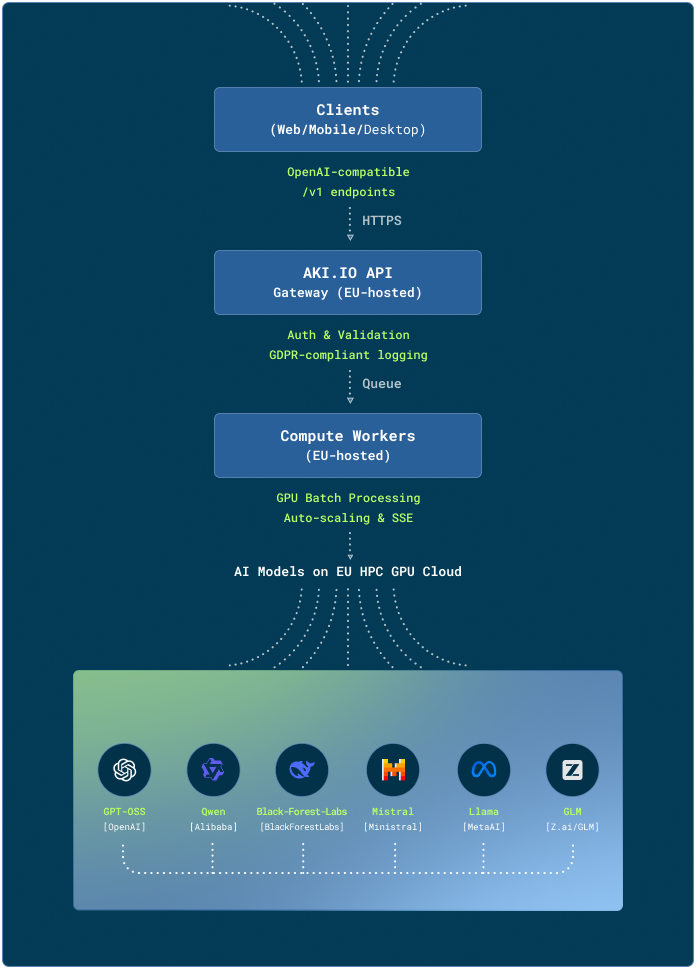

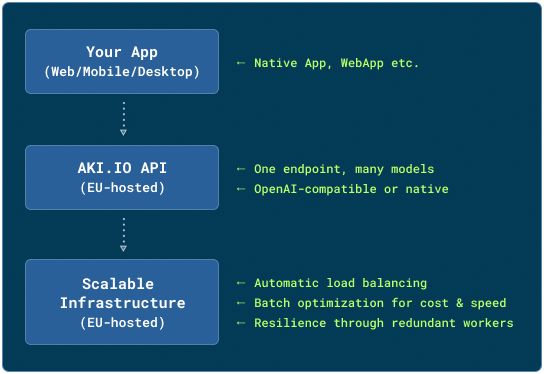

Overview: AKI.IO API Architecture

AKI.IO routes AI requests through a secure job queue to distributed GPU infrastructure hosted in Europe. The platform abstracts model operations behind a documented API layer so teams can evaluate, integrate, and scale model inference without managing the underlying compute environment themselves.

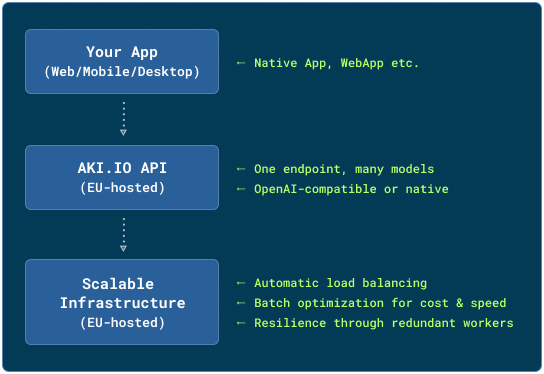
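For long-running jobs, a queue-based design usually means a submit-then-poll pattern on the client side. The sketch below assumes the job state comes back as a dict with a `status` field whose terminal values are `"done"` or `"failed"`. Both the field name and the values are hypothetical. `get_status` stands in for whatever call your client uses to fetch a job's current state.

```python
import time
from typing import Any, Callable, Dict


def poll_until_done(
    get_status: Callable[[], Dict[str, Any]],
    interval_s: float = 1.0,
    timeout_s: float = 60.0,
    sleep: Callable[[float], None] = time.sleep,
) -> Dict[str, Any]:
    """Call get_status() until the job reaches a terminal state or the timeout expires."""
    # Hypothetical status field and values; adjust to the real response schema
    terminal_states = {"done", "failed"}
    deadline = time.monotonic() + timeout_s
    while True:
        status = get_status()
        if status.get("status") in terminal_states:
            return status
        if time.monotonic() >= deadline:
            raise TimeoutError("job did not reach a terminal state in time")
        sleep(interval_s)  # wait before asking the queue again


# Usage with a fake status source that finishes on the third poll
fake_states = iter([{"status": "queued"}, {"status": "processing"}, {"status": "done", "result": "ok"}])
print(poll_until_done(lambda: next(fake_states), sleep=lambda _: None))
```

Injecting `sleep` keeps the loop testable. In production you would keep the default `time.sleep`.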

The Concept: Simple, Practical, Scalable

AKI.IO abstracts the complexity of model hosting and GPU operations behind documented APIs and client interfaces. Instead of operating your own inference stack, your application sends standard requests to curated model endpoints and receives structured responses for production workflows.

Why the AKI.IO API Matters for Builders

One infrastructure layer for multiple model types

AKI.IO exposes curated LLM and image workflows through documented interfaces, so teams can evaluate and deploy models without self-hosting.

Migration paths for existing integrations

AKI.IO is positioned for teams already working with OpenAI- and Anthropic-style APIs, which lowers the migration effort for existing applications and agent stacks.

Streaming & Real-Time Feedback

Clients receive continuous status updates or token streams via Server-Sent Events (SSE), ideal for chat interfaces, progress indicators, or interactive generation.
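Consuming such a stream only requires a small parser. This sketch assumes the stream follows the OpenAI chat-completions convention: one `data: {...}` line per chunk, text in `choices[i].delta.content`, and a final `data: [DONE]` line. It also assumes each event fits on a single `data:` line. AKI.IO's exact event format may differ, so treat this as a starting point rather than a reference implementation.

```python
import json
from typing import Iterable, Iterator


def iter_sse_tokens(lines: Iterable[str]) -> Iterator[str]:
    """Yield text deltas from OpenAI-style chat-completion SSE lines (assumed format)."""
    for raw in lines:
        line = raw.strip()
        if not line.startswith("data:"):
            continue  # skip blank event separators, ":" comments, and event/id fields
        payload = line[len("data:"):].strip()
        if payload == "[DONE]":
            return  # end-of-stream sentinel in the OpenAI convention
        chunk = json.loads(payload)
        for choice in chunk.get("choices", []):
            delta = choice.get("delta", {}).get("content")
            if delta:
                yield delta


sample_stream = [
    'data: {"choices": [{"delta": {"content": "Hel"}}]}',
    "",
    'data: {"choices": [{"delta": {"content": "lo!"}}]}',
    "",
    "data: [DONE]",
]
print("".join(iter_sse_tokens(sample_stream)))  # Hello!
```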

Robust by Design

- Automatic retry logic for network interruptions

- Job queuing with prioritization for time-sensitive requests

- Graceful degradation: During traffic spikes, non-critical jobs are buffered without compromising overall availability
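The list above does not specify whether retries happen on the platform, in the client libraries, or both. If you call the HTTP API directly, a common client-side pattern is exponential backoff with jitter. `with_retries` below is a hypothetical helper for illustration, not part of any AKI.IO client, and the choice of which exceptions count as transient is an assumption.

```python
import random
import time
from typing import Callable, Tuple, Type, TypeVar

T = TypeVar("T")


def with_retries(
    call: Callable[[], T],
    attempts: int = 4,
    base_delay_s: float = 0.5,
    retry_on: Tuple[Type[BaseException], ...] = (ConnectionError, TimeoutError),
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Run call(), retrying transient failures with exponential backoff and jitter."""
    for attempt in range(attempts):
        try:
            return call()
        except retry_on:
            if attempt == attempts - 1:
                raise  # out of attempts: surface the last error to the caller
            # 0.5 s, 1 s, 2 s, ... plus up to 100 ms of random jitter
            sleep(base_delay_s * (2 ** attempt) + random.uniform(0, 0.1))
    raise ValueError("attempts must be at least 1")
```

Jitter spreads retries from many clients over time, so a brief outage does not end in a synchronized burst of repeated requests.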

Type Safety & Validation Out-of-the-Box

Every endpoint clearly defines expected inputs and delivered outputs. Invalid parameters are caught early, with helpful error messages that accelerate integration.
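Server-side validation still applies, but a cheap local pre-check can catch obvious mistakes before a request leaves your application. This sketch assumes an OpenAI-style chat payload with text-only `content` and the roles `system`, `user`, and `assistant`. `validate_chat_payload` is a hypothetical helper, not an AKI.IO API.

```python
from typing import Any, Dict, List

ALLOWED_ROLES = {"system", "user", "assistant"}  # assumption: OpenAI-style roles


def validate_chat_payload(payload: Dict[str, Any]) -> List[str]:
    """Return human-readable problems; an empty list means the payload looks valid."""
    errors: List[str] = []
    if not isinstance(payload.get("model"), str) or not payload["model"]:
        errors.append("'model' must be a non-empty string")
    messages = payload.get("messages")
    if not isinstance(messages, list) or not messages:
        errors.append("'messages' must be a non-empty list")
        return errors  # nothing further to check without messages
    for i, msg in enumerate(messages):
        if not isinstance(msg, dict):
            errors.append(f"messages[{i}] must be an object")
            continue
        if msg.get("role") not in ALLOWED_ROLES:
            errors.append(f"messages[{i}].role must be one of {sorted(ALLOWED_ROLES)}")
        if not isinstance(msg.get("content"), str):
            errors.append(f"messages[{i}].content must be a string")
    return errors
```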

Built-In Media Handling

Send Base64-encoded data or a URL: the API handles encoding, format conversion, and size optimization automatically.
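If your input image is a local file, encoding it takes only a few lines of standard-library Python. The sketch produces a `data:` URL. This section does not specify whether an AKI.IO endpoint expects a data URL or a bare Base64 string, or under which request field, so check the endpoint's documentation before relying on either form.

```python
import base64
import mimetypes
from pathlib import Path


def to_data_url(path: str) -> str:
    """Encode a local image file as a data URL: data:<mime>;base64,<payload>."""
    mime, _ = mimetypes.guess_type(path)  # inferred from the file extension only
    if mime is None or not mime.startswith("image/"):
        raise ValueError(f"cannot infer an image MIME type for {path!r}")
    encoded = base64.b64encode(Path(path).read_bytes()).decode("ascii")
    return f"data:{mime};base64,{encoded}"
```

For example, `to_data_url("input.png")` returns a string starting with `data:image/png;base64,`, which you can place in whichever field the target endpoint expects.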

Integration: Simple by Design

The AKI.IO API was built from the ground up for developer experience. Three principles guide integration:

| Principle | What It Means for You |
|---|---|
| OpenAI and Anthropic compatibility | For compatibility-based integrations, use https://aki.io/v1 for OpenAI- and Anthropic-style access, https://aki.io/openai/v1 for OpenAI-only integrations, and https://aki.io/anthropic/v1 for Anthropic-only integrations. |
| Flexible client support | Python, JavaScript, cURL, Java, mobile SDKs: the API speaks HTTP/JSON, so any language with an HTTP client can integrate. |
| Blocking and streaming responses | Need a quick response? Use synchronous mode. Running a long job? Start it asynchronously and fetch results via polling or SSE. |
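To illustrate the compatibility routes, the following stdlib-only sketch builds (without sending) an OpenAI-style chat-completions request against either the combined or the OpenAI-only base URL. The `/chat/completions` path under `https://aki.io/v1` matches the JavaScript streaming example in this article. Appending the same path to `https://aki.io/openai/v1` is an assumption. Anthropic-style requests use a different path and body shape, so they are deliberately left out.

```python
import json
import urllib.request
from typing import Any, Dict, List

# OpenAI-style base URLs from the integration table; the Anthropic-only route is omitted
OPENAI_STYLE_BASE_URLS = {
    "combined": "https://aki.io/v1",
    "openai": "https://aki.io/openai/v1",
}


def build_chat_request(
    api_key: str,
    model: str,
    messages: List[Dict[str, Any]],
    route: str = "combined",
    stream: bool = False,
) -> urllib.request.Request:
    """Build, but do not send, an OpenAI-style chat-completions POST request."""
    # Assumption: both routes expose the same /chat/completions path
    url = OPENAI_STYLE_BASE_URLS[route] + "/chat/completions"
    body = json.dumps({"model": model, "messages": messages, "stream": stream}).encode("utf-8")
    return urllib.request.Request(
        url,
        data=body,
        headers={"Authorization": f"Bearer {api_key}", "Content-Type": "application/json"},
        method="POST",
    )


req = build_chat_request("YOUR_KEY", "llama-3.1-8b", [{"role": "user", "content": "Hello!"}])
print(req.get_method(), req.full_url)  # POST https://aki.io/v1/chat/completions
```

Pass the result to `urllib.request.urlopen(req)` to actually send it.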

Example: Chat in 3 Lines (Python)

```python
from aki_io import Aki

aki = Aki("llama-3.1-8b-chat", "YOUR_API_KEY")

print(aki.do_api_request({"messages": [{"role": "user", "content": "Hello!"}]})["choices"][0]["message"]["content"])
```

Example: Image Generation via cURL

```bash
curl https://aki.io/v1/images/generations \
  -H "Authorization: Bearer YOUR_KEY" \
  -H "Content-Type: application/json" \
  -d '{"prompt": "A futuristic Berlin skyline", "model": "flux-1-dev"}'
```

Example: Streaming in a Web App (JavaScript)

```javascript
// Plain fetch() plus an SSE parser is all you need
// (top-level await requires an ES module; otherwise wrap this in an async function)
const response = await fetch("https://aki.io/v1/chat/completions", {
  method: "POST",
  headers: { "Authorization": "Bearer YOUR_KEY", "Content-Type": "application/json" },
  body: JSON.stringify({
    model: "llama-3.1-8b",
    messages: [{ role: "user", content: "Hello!" }],
    stream: true,
  }),
});

// Tokens arrive live as SSE events on response.body, like OpenAI, but served from Europe.
```

💡 Tip: The Client Library Docs provide copy-paste snippets for the most common languages, including error handling and streaming templates.
