Agentic AI in Europe: What Teams Should Get Right Early


Agentic AI systems do more than answer questions: they act on code, documents, and workflows. For European teams, that changes the evaluation setup early. As soon as internal code, documents, credentials, or workflows enter the loop, model access becomes part of the trust boundary. That makes infrastructure, permissions, and interface stability more important than they are in a basic chat application.

This article is for teams evaluating agent frameworks, coding agents, or orchestration layers on real internal workflows. The practical question is not only which model or tool looks most impressive in a demo. It is how to build a setup that stays portable, controlled, and governable as experimentation becomes more serious.

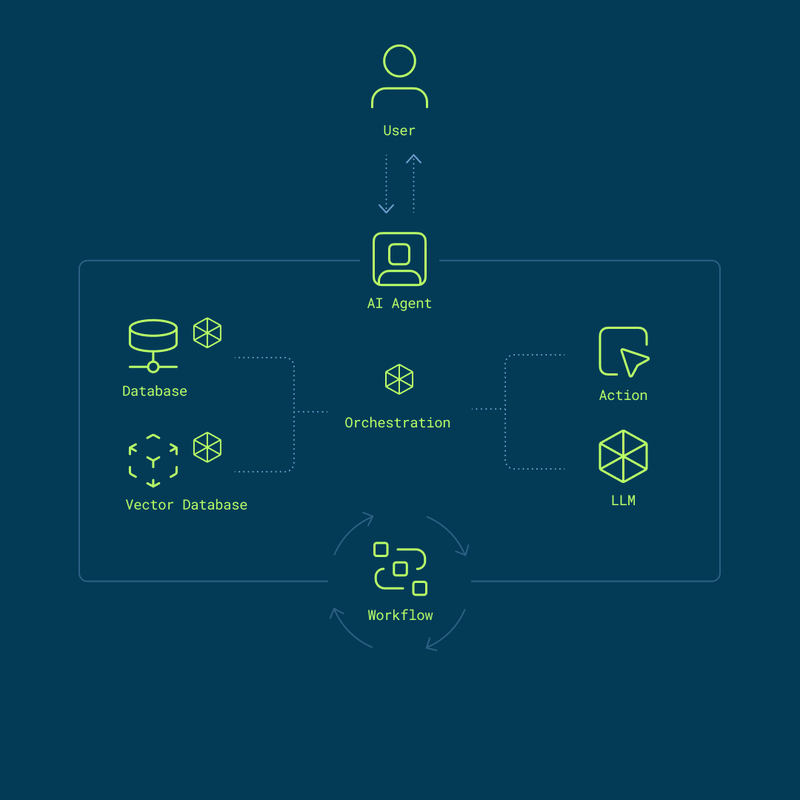

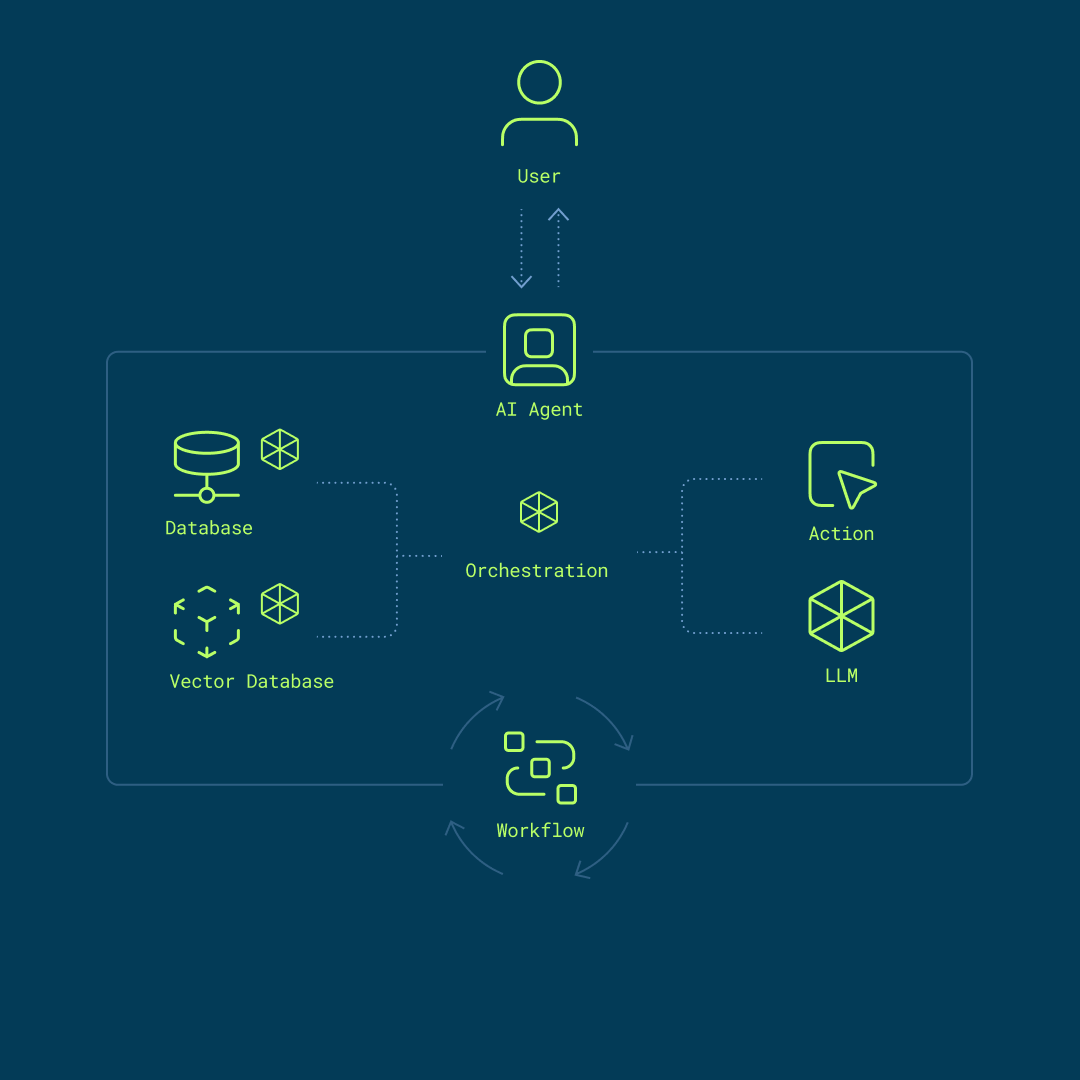

Agents and orchestrators solve different problems

It helps to think of the current market as two related but distinct layers.

One layer consists of AI-powered agents that help users execute work more directly. Tools such as OpenCode, Claude Code, OpenClaw, Hermes Agent, Nanobot, and RooCode are often used this way in coding workflows across terminal, desktop, or IDE environments.

The other layer consists of orchestration frameworks that structure, coordinate, and control more complex workflows. LangGraph and n8n are often used in this role for multi-step systems with state, retries, approvals, memory, or role separation.

Some tools sit somewhere between those layers. Multica, for example, can be understood as sitting between direct execution and a more structured software-agent environment. Broader assistant-style systems such as Paperclip may also extend beyond a terminal-native coding agent by combining persistent tooling with wider action surfaces.

The exact category matters less than the architectural takeaway. The top layer will continue to evolve quickly. The infrastructure underneath should be more stable.

The market is expanding, not converging

The current framework landscape is not settling around one default choice. It is broadening into categories.

Some tools are gaining traction because they help developers act quickly in hands-on environments. Others are becoming more important because they help teams build structured workflows with clearer control over execution.

That fragmentation is not a problem. But it does mean teams should avoid tying their entire setup too tightly to one framework. The workflow layer will keep moving fast. The model access layer should stay more portable and easier to control.

This is where a stable API layer matters. OpenAI- and Anthropic-compatible access gives teams a familiar interface while allowing them to test different agents, orchestrators, and models without redesigning the stack each time.
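One way to picture that portability is to keep the endpoint, key, and model in configuration rather than in framework code. The sketch below is illustrative only: the environment variable names and the example URL are assumptions, and the request shape follows the widely used OpenAI-style chat completions convention rather than any documented provider-specific behavior. It builds the request without sending it.

```python
import json
import os
import urllib.request
from dataclasses import dataclass


@dataclass(frozen=True)
class InferenceConfig:
    """Everything a framework needs to reach the model layer."""
    base_url: str  # hypothetical, e.g. "https://inference.example.eu/v1"
    api_key: str
    model: str     # hypothetical model identifier


def load_config(env=os.environ) -> InferenceConfig:
    # Variable names are assumptions made for this sketch.
    return InferenceConfig(
        base_url=env["INFERENCE_BASE_URL"].rstrip("/"),
        api_key=env["INFERENCE_API_KEY"],
        model=env["INFERENCE_MODEL"],
    )


def build_chat_request(cfg: InferenceConfig, prompt: str) -> urllib.request.Request:
    """Build (but do not send) an OpenAI-style chat completions request."""
    body = {
        "model": cfg.model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=f"{cfg.base_url}/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {cfg.api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )
```

Swapping the agent or orchestrator on top then means changing configuration values, not the code that talks to the model layer.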

Deployment discipline matters more than the demo

A lot of discussion around agentic AI still focuses on prompting, benchmarks, or model choice. In practice, deployment and access control matter just as much.

Most of these systems need deliberate setup by the responsible developer or engineering team. The key question is not only which model performs best. It is also what the system is allowed to access, which tools it can call, which credentials it uses, and where human approval is still required.

That applies across the stack.

A coding agent with shell access can save time, but it can also make unwanted changes if its permissions are too broad. A persistent assistant can be useful across channels and sessions, but only if those integrations are scoped carefully. An orchestrated workflow may look clean in a diagram, but once it touches repositories, documents, inboxes, or APIs, access design becomes part of the core system.

Serious teams therefore do not deploy these tools casually. They isolate runtimes, limit permissions, separate environments, and keep clear ownership around credentials and approvals. Agentic systems are powerful because they can act. That is exactly why they require more deliberate control.
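A minimal sketch of what "limit permissions" and "human approval" can look like in code, assuming a hypothetical wrapper that every tool call passes through. The names `ToolPolicy`, `run_tool`, and `approve` are invented for illustration and do not correspond to any specific agent framework.

```python
from dataclasses import dataclass, field
from typing import Any, Callable


@dataclass
class ToolPolicy:
    # Tools that may run without review in this environment.
    allowed: set[str] = field(default_factory=set)
    # Tools that run only after a human explicitly approves the call.
    needs_approval: set[str] = field(default_factory=set)


class ToolDenied(Exception):
    """Raised when a tool call is blocked by policy or by the reviewer."""


def run_tool(
    name: str,
    tool: Callable[..., Any],
    policy: ToolPolicy,
    approve: Callable[[str, tuple], bool],
    *args: Any,
) -> Any:
    if name in policy.allowed:
        return tool(*args)
    if name in policy.needs_approval:
        if approve(name, args):
            return tool(*args)
        raise ToolDenied(f"{name}: approval refused")
    # Anything not listed is denied by default.
    raise ToolDenied(f"{name}: not permitted in this environment")
```

The design choice worth noting is the default: a tool that appears in neither set is rejected, so broadening an agent's reach is always a deliberate edit to the policy.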

Why infrastructure becomes an early design decision

With a basic chat application, infrastructure choices can often be postponed. Agentic systems change that.

As soon as an AI system can inspect repositories, read documents, execute commands, or interact with external systems, the inference endpoint becomes part of the trust boundary. The same is true when an orchestrator coordinates several steps across sensitive workflows.

At that point, model access is no longer just a backend detail. It becomes part of the operating model.

This matters early for European teams because many projects are first evaluated on realistic internal workflows, not toy examples. The moment internal code, customer documents, support material, or business processes enter the loop, questions around hosting, data handling, and access control show up quickly.

An EU-hosted inference layer can improve that evaluation setup. It allows teams to test agents and orchestration frameworks with model access hosted in Europe. That does not replace the need for careful security design, but it does create clearer data-handling boundaries and a stronger privacy posture during evaluation.

The more coherent approach is straightforward: controlled runtime, controlled model access, and infrastructure that matches European operational requirements from the beginning.
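"Controlled model access" can be partly enforced in code by refusing to start an agent whose inference endpoint is not on an approved list. The following is a rough sketch under that assumption; the host name is a placeholder, and a host check only guards against misconfiguration. It says nothing by itself about where data is actually processed.

```python
from urllib.parse import urlsplit

# Hypothetical allowlist; a real one would come from reviewed configuration.
APPROVED_HOSTS = frozenset({"inference.example.eu"})


def is_approved_endpoint(url: str, approved_hosts=APPROVED_HOSTS) -> bool:
    """Accept only HTTPS URLs whose host exactly matches an approved entry."""
    parts = urlsplit(url)
    return parts.scheme == "https" and parts.hostname in approved_hosts
```

Exact host matching is deliberate here: a suffix or substring match would also accept look-alike hosts such as `inference.example.eu.attacker.test`.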

Why interface stability matters

The more fragmented the agent ecosystem becomes, the more important interface stability becomes.

A developer may use OpenCode for coding workflows today. Another team may test OpenClaw-style assistants for broader automation. Product teams may build structured workflows with LangGraph or CrewAI. The framework can change. The interface to inference should not need to change every time.

That is why a stable, familiar API layer is strategically useful. It reduces migration effort, preserves optionality at the framework layer, and makes evaluation easier for teams that want to avoid default dependence on non-European model endpoints.

For teams evaluating agentic systems, that matters because infrastructure choices usually last longer than framework preferences.

How AKI.IO fits into this setup

AKI.IO provides EU-hosted inference for open-source and open-weight models through an OpenAI-compatible API.

The practical goal is simple: give teams a way to evaluate, build, and operate AI applications on European infrastructure without taking on the overhead of self-hosting or locking themselves into one model provider.

For agentic systems, this matters because the upper layers of the stack are changing quickly. Teams want room to test different frameworks and workflows. They do not want to rebuild the model layer every time they do so.

That makes a stable API layer useful across several scenarios: coding agents, assistant-style systems, and more structured orchestrated workflows. The benefit is not abstract. It is a more practical path to testing modern AI systems with clearer infrastructure and data-handling boundaries.

Usage control matters in agentic systems

One of the more practical realities of agentic AI is that token consumption can become difficult to predict.

These systems do not behave like single-turn chat interfaces. They plan, retry, summarize, branch, call tools, and sometimes loop in ways that are not obvious at first. During testing, that can lead to unnecessary spend if usage controls are too loose.

That is why cost and access control should be built into the setup early.

With AKI.IO, token usage can be capped per API key. That helps reduce the risk of uncontrolled token burn during autonomous loops, failed logic, or overly permissive experiments.
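A provider-side cap can be complemented by a guard inside the agent loop itself. The sketch below is a client-side illustration, not a description of how AKI.IO enforces its caps: `TokenBudget`, `run_agent`, and the `step` callable are hypothetical, and the token count per step is assumed to come from the usage figures that OpenAI-style responses typically report.

```python
from typing import Callable, Tuple


class TokenBudgetExceeded(RuntimeError):
    """Raised when the loop would continue with no budget left."""


class TokenBudget:
    def __init__(self, max_tokens: int) -> None:
        self.max_tokens = max_tokens
        self.used = 0

    @property
    def remaining(self) -> int:
        return self.max_tokens - self.used


def run_agent(
    step: Callable[[], Tuple[bool, int]],
    budget: TokenBudget,
    max_steps: int = 20,
) -> int:
    """Run step() until it reports done; return the number of steps taken.

    step() returns (done, tokens_used) for one model call.
    """
    for step_no in range(1, max_steps + 1):
        # Check before each call, so a runaway loop stops at the next step.
        if budget.remaining <= 0:
            raise TokenBudgetExceeded(f"used {budget.used} of {budget.max_tokens}")
        done, tokens = step()
        budget.used += tokens
        if done:
            return step_no
    raise RuntimeError("step limit reached without finishing")
```

Because usage is only known after a call returns, this guard can overshoot by one call. That is one reason a hard cap on the key remains the stronger control.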

A professional company account can also use multiple API keys across teams, environments, projects, or workflows. Dedicated endpoints can be selected per API key, so a given agent or orchestration workflow only has access to the models it actually needs.

In practice, that creates a cleaner evaluation setup. One API key can be used for a staging coding agent. Another can be reserved for an internal workflow built with LangGraph. Another can be used for a sandboxed OpenClaw or OpenHands experiment. Usage stays separated, access stays clearer, and control becomes easier to maintain.
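The separation described above could be mirrored on the client side with a small lookup that maps each workflow to its own key and the models it is expected to use. Everything named here is an assumption for illustration: the workflow labels, environment variable names, and model identifiers are placeholders, and the authoritative restrictions would still be the ones configured on the keys themselves.

```python
import os
from dataclasses import dataclass


@dataclass(frozen=True)
class KeyProfile:
    env_var: str        # where this workflow's API key is stored
    models: frozenset   # models this workflow is expected to call


# Hypothetical profiles, one per workflow.
PROFILES = {
    "staging-coding-agent": KeyProfile("KEY_STAGING_CODING_AGENT", frozenset({"model-a"})),
    "langgraph-internal": KeyProfile("KEY_LANGGRAPH_INTERNAL", frozenset({"model-a", "model-b"})),
    "sandbox-experiment": KeyProfile("KEY_SANDBOX_EXPERIMENT", frozenset({"model-c"})),
}


def resolve_key(workflow: str, model: str, env=os.environ) -> str:
    """Return the API key for a workflow, refusing models outside its profile."""
    profile = PROFILES[workflow]
    if model not in profile.models:
        raise PermissionError(f"{workflow} is not configured for {model}")
    return env[profile.env_var]
```

A call such as `resolve_key("sandbox-experiment", "model-c")` returns the sandbox key, while the same workflow asking for `model-a` fails before any request is made.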

The practical takeaway

The important shift is not one framework alone. It is that the market is separating into tools that help AI systems execute work more directly and frameworks that help teams structure and control that work.

For European teams, the practical sequence is straightforward:

- Choose the workflow layer that fits the use case.

- Isolate the runtime and permissions deliberately.

- Keep model access portable through an EU-hosted API layer.

That is the operating logic AKI.IO is built to support: a more practical way to test and run modern AI systems on European infrastructure with OpenAI-compatible access, clearer data-handling boundaries, and stronger usage control.
