The European AI API

Access leading open-source AI models via API on secure EU infrastructure. A drop-in replacement for OpenAI, built for GDPR compliance, operational control, and flexible model choice — so you can test, deploy, and scale your AI applications faster.

Simple

AI model API designed to work out of the box.

Compliant

GDPR-compliant, built on open-source principles.

Predictable

Token-based, pure pay-per-use. No upfront investment.

Flexible

Optimized for rapid AI model testing, deployment and scaling.

Access Leading Open-Source Models Instantly

One API. Multiple AI Models.

Your business is unique. Your AI models should be too. Whether for customer service and marketing, content creation and edutainment, coding, or science and R&D, each AI workflow has its own requirements and priorities.

Our European AI API connects you to curated, leading open-source AI models for every business domain. The models are benchmarked and optimized for production-grade latency, predictable cost, and reliability, and they are hosted securely in the European Union.

Switch models anytime, compare performance, or adapt your stack as your needs evolve. No vendor lock-in, proprietary constraints or hidden dependencies — your workloads run efficiently across leading AI models with the best price-performance ratio in Europe.
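Comparing models usually means sending the same prompt to each candidate and measuring how it responds. The sketch below times a caller-supplied function per model and ranks the models by mean latency. `compare_latency` and the stub model names are hypothetical, and `call_model` stands in for whichever client call you actually use (for example the `aki_io` client shown further down this page). It measures wall-clock latency only, not output quality or cost.

```python
import time
from typing import Callable, List, Tuple


def compare_latency(
    models: List[str],
    call_model: Callable[[str, str], object],
    prompt: str,
    repeats: int = 3,
) -> List[Tuple[str, float]]:
    """Return (model, mean latency in seconds) pairs, fastest first.

    call_model(model, prompt) is a hypothetical stand-in for your real client call.
    """
    if repeats < 1:
        raise ValueError("repeats must be at least 1")
    means = []
    for model in models:
        total = 0.0
        for _ in range(repeats):
            start = time.perf_counter()
            call_model(model, prompt)
            total += time.perf_counter() - start
        means.append((model, total / repeats))
    # Ascending mean latency: the fastest model comes first.
    return sorted(means, key=lambda pair: pair[1])


if __name__ == "__main__":
    # Hypothetical stub simulating two models with different response times.
    def fake_call(model: str, prompt: str) -> str:
        time.sleep(0.01 if model == "model_a" else 0.03)
        return f"{model}: {prompt}"

    for name, seconds in compare_latency(["model_b", "model_a"], fake_call, "Hello!"):
        print(f"{name}: {seconds * 1000:.1f} ms")
```

Because the timing loop only depends on a callable, the same helper works whether the candidates are reached through the SDK, raw HTTP, or an OpenAI-compatible client.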

Infrastructure is hosted in European data centers. ISO 27001, TÜVIT TSI, DIN EN 50600 and other certifications apply to the respective data center operators.

AI Infrastructure for Teams Who Ship

High-performance

Low latency and high throughput on optimized HPC GPU clouds

GDPR-compliant

Sovereign infrastructure and tech stack; data never leaves the EU

Developer-friendly

Well-documented interface with an active developer community

Self-service setup

Token-based billing

Code-agnostic API

Rapid model updates

The European Model Layer for Agentic AI Systems

Agentic systems such as OpenClaw, OpenCode or Claude Code are moving from demos into production. Teams now use frameworks and coding agents to read documents, call tools, write code, run terminal commands, and automate multi-step tasks.

This creates a new infrastructure requirement. Once AI systems can access internal tools, email, tickets, or codebases, model hosting becomes a compliance, control, and vendor-risk decision.

AKI.IO provides the European model layer for these workflows. Use EU-hosted inference models through an OpenAI- and Anthropic-compatible API, switch across curated models, and keep sensitive workloads on European infrastructure.

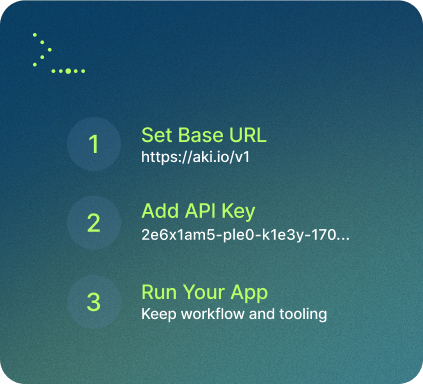

Easy Migration: Drop-in Replacement for OpenAI & Anthropic

Move existing AI features to EU-hosted inference without a full rewrite. AKI.IO is built for teams that already use OpenAI- and Anthropic-style APIs. Integration starts with changing the endpoint and API key, while your app logic, agent framework, and orchestration layer remain largely unchanged. This offers a practical path to European AI infrastructure.
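To illustrate what changing the endpoint and API key means in practice, this sketch builds (without sending) an OpenAI-style and an Anthropic-style chat request using only the Python standard library. The request shapes follow the public OpenAI Chat Completions and Anthropic Messages APIs. The base URLs are hypothetical placeholders, since this page does not list AKI.IO's compatible endpoints, and `example-model` is not a real model name. Compared with calling OpenAI or Anthropic directly, only the base URL, the key, and the model name would change.

```python
import json
import urllib.request

# Hypothetical placeholders: this page does not list AKI.IO's compatible base URLs.
OPENAI_STYLE_BASE_URL = "https://example.invalid/openai/v1"
ANTHROPIC_STYLE_BASE_URL = "https://example.invalid/anthropic"
API_KEY = "YOUR_API_KEY"


def _json_post(url: str, body: dict, headers: dict) -> urllib.request.Request:
    """Build (but do not send) a JSON POST request."""
    return urllib.request.Request(
        url,
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json", **headers},
        method="POST",
    )


def openai_style_request(base_url: str, api_key: str, model: str, prompt: str) -> urllib.request.Request:
    """OpenAI Chat Completions shape: POST {base_url}/chat/completions, Bearer auth."""
    body = {"model": model, "messages": [{"role": "user", "content": prompt}]}
    return _json_post(
        f"{base_url.rstrip('/')}/chat/completions",
        body,
        {"Authorization": f"Bearer {api_key}"},
    )


def anthropic_style_request(
    base_url: str, api_key: str, model: str, prompt: str, max_tokens: int = 256
) -> urllib.request.Request:
    """Anthropic Messages shape: POST {base_url}/v1/messages, x-api-key auth."""
    body = {
        "model": model,
        "max_tokens": max_tokens,
        "messages": [{"role": "user", "content": prompt}],
    }
    return _json_post(
        f"{base_url.rstrip('/')}/v1/messages",
        body,
        {"x-api-key": api_key, "anthropic-version": "2023-06-01"},
    )
```

In applications that use the official OpenAI or Anthropic SDKs, the equivalent change is typically made through the client's base URL and API key settings rather than by building requests by hand.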

One API Key for Agentic AI Workflows

High-Performance AI Infrastructure

AKI API routes AI requests through a secure job queue to distributed GPU clusters. No special network setup is required. We rely on industry standards, and all components are open source. Connect your apps to state-of-the-art AI models running on high-performance infrastructure, and scale workloads across locations. Developers can turn any PyTorch or TensorFlow model into a secure, production-ready API in minutes.
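The job-queue pattern described above can be pictured with a toy, in-process model: clients submit jobs, worker threads (standing in for GPU nodes) pick them up, and the client blocks until its result is ready, similar in spirit to the `wait_for_result` flag in the curl example. `ToyJobQueue` is hypothetical and is not AKI.IO's implementation; real workers would run on remote GPU clusters behind an authenticated API.

```python
import queue
import threading
import uuid
from typing import Any, Callable, Dict


class ToyJobQueue:
    """Hypothetical toy job queue: submit jobs, workers process them, callers wait.

    Worker threads stand in for remote GPU nodes.
    """

    def __init__(self, handler: Callable[[dict], Any], num_workers: int = 2) -> None:
        self._handler = handler
        self._jobs: queue.Queue = queue.Queue()
        self._results: Dict[str, Any] = {}
        self._events: Dict[str, threading.Event] = {}
        self._lock = threading.Lock()
        # Daemon threads exit automatically when the main program ends.
        for _ in range(num_workers):
            threading.Thread(target=self._work, daemon=True).start()

    def submit(self, params: dict) -> str:
        """Enqueue a job and return its ID immediately."""
        job_id = uuid.uuid4().hex
        with self._lock:
            self._events[job_id] = threading.Event()
        self._jobs.put((job_id, params))
        return job_id

    def wait_for_result(self, job_id: str, timeout: float = 5.0) -> Any:
        """Block until the job finishes, then return (and forget) its result."""
        with self._lock:
            event = self._events[job_id]
        if not event.wait(timeout):
            raise TimeoutError(f"job {job_id} did not finish within {timeout} s")
        with self._lock:
            del self._events[job_id]
            return self._results.pop(job_id)

    def _work(self) -> None:
        while True:
            job_id, params = self._jobs.get()
            try:
                result = self._handler(params)
            except Exception as exc:  # report failures instead of killing the worker
                result = {"error": repr(exc)}
            with self._lock:
                self._results[job_id] = result
                event = self._events[job_id]
            event.set()
            self._jobs.task_done()


if __name__ == "__main__":
    # Stub "model" that upper-cases the last user message.
    jobs = ToyJobQueue(lambda p: {"reply": p["chat_context"][-1]["content"].upper()})
    job_id = jobs.submit({"chat_context": [{"role": "user", "content": "Hello!"}]})
    print(jobs.wait_for_result(job_id))  # prints {'reply': 'HELLO!'}
```

Decoupling submission from execution is what lets a queue spread requests across many workers while each client still receives its own result.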

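from aki_io import Aki

# Client bound to the llama3_chat model
aki = Aki('llama3_chat', 'YOUR_API_KEY')

# Chat history sent to the model
params = {
    "chat_context": [{"role": "user", "content": "Hello!"}],
}

# Send the request and print the response
result = aki.do_api_request(params)
print(result)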
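If the `aki_io` package is not available, the same call can be sketched with Python's standard library. This sketch assumes the endpoint pattern and JSON body from the curl example on this page (`https://aki.io/api/call/<model>` with the fields `key`, `chat_context`, and `wait_for_result`) and assumes the response body is JSON. `build_aki_request` and `send` are hypothetical helper names, not part of any official client.

```python
import json
import urllib.request

# Endpoint pattern taken from the curl example; other model names are assumed to follow it.
API_BASE = "https://aki.io/api/call"


def build_aki_request(
    model: str, api_key: str, chat_context: list, wait_for_result: bool = True
) -> urllib.request.Request:
    """Build the same POST request as the curl example, without sending it."""
    payload = {"key": api_key, "chat_context": chat_context, "wait_for_result": wait_for_result}
    return urllib.request.Request(
        f"{API_BASE}/{model}",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def send(request: urllib.request.Request, timeout: float = 60.0) -> dict:
    """Send the request and decode the response, assuming it is JSON (needs network access)."""
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return json.loads(response.read().decode("utf-8"))
```

Usage, which requires network access and a valid key: `print(send(build_aki_request("llama3_chat", "YOUR_API_KEY", [{"role": "user", "content": "Hello!"}])))`.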

const { doAPIRequest } = require('../aki_io');

// Chat history sent to the model
const params = {
  chat_context: [{ role: 'user', content: 'Hello!' }],
};

// Send the request and log the result in the callback
doAPIRequest('llama3_chat', 'YOUR_API_KEY', params, (result) => {
  console.log(result);
});

curl -X POST -H 'Content-Type: application/json' \
  -d '{"key":"YOUR_API_KEY","chat_context":[{"role":"user","content":"Hello!"}],"wait_for_result":true}' \
  https://aki.io/api/call/llama3_chat

Pricing: Pay as You Go, Without Fixed Costs

Pay monthly for what you use. No hidden fees, no upfront commitments. Scale from prototype to production with predictable, token-based pricing.

Large Language Models

| Model | Input (per 1M tokens) | Output (per 1M tokens) |
|---|---|---|
|  | 1.25 € | 2.00 € |
|  | 0.15 € | 0.55 € |
|  | 0.25 € | 0.65 € |
|  | 0.15 € | 0.15 € |
|  | 0.65 € | 0.65 € |
|  | 0.25 € | 1.20 € |
|  | 0.20 € | 0.20 € |
|  | 0.25 € | 0.50 € |
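Token-based pricing makes cost estimates simple arithmetic: divide each token count by one million, multiply by the matching per-1M-token price, and add input and output. The helper below is a hypothetical sketch that uses `Decimal` to avoid floating-point rounding; the example prices come from the 0.15 € / 0.55 € row of the table above, and the result is a net amount excluding VAT.

```python
from decimal import Decimal

TOKENS_PER_UNIT = Decimal(1_000_000)  # prices are quoted per 1M tokens


def llm_cost_eur(input_tokens: int, output_tokens: int, input_price: str, output_price: str) -> Decimal:
    """Net cost in EUR (excl. VAT) for one model, given per-1M-token prices as strings."""
    if input_tokens < 0 or output_tokens < 0:
        raise ValueError("token counts must be non-negative")
    input_cost = Decimal(input_tokens) * Decimal(input_price)
    output_cost = Decimal(output_tokens) * Decimal(output_price)
    return (input_cost + output_cost) / TOKENS_PER_UNIT


if __name__ == "__main__":
    # 2M input tokens and 0.5M output tokens at 0.15 € / 0.55 € per 1M tokens
    print(llm_cost_eur(2_000_000, 500_000, "0.15", "0.55"))
```

For that example, the cost is 2 × 0.15 € + 0.5 × 0.55 € = 0.30 € + 0.275 € = 0.575 € net.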

Image Generation

| Model | Output (per image, 1 Mpx) |
|---|---|
|  | 0.005 € |
|  | 0.075 € |
|  | 0.12 € |
|  | 0.009 € |

All prices are exclusive of VAT and other applicable taxes.
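Image generation is priced per 1 Mpx image, and all prices are net. The hypothetical sketch below estimates a batch cost and adds VAT. It assumes every image is 1 Mpx, since this page does not state how other resolutions are billed, and it takes the VAT rate as a parameter because the applicable rate depends on your jurisdiction and tax status; the 19% used here is only an example value.

```python
from decimal import Decimal


def image_cost_eur(num_images: int, price_per_image: str) -> Decimal:
    """Net cost in EUR (excl. VAT), assuming every image is 1 Mpx."""
    if num_images < 0:
        raise ValueError("num_images must be non-negative")
    return Decimal(num_images) * Decimal(price_per_image)


def gross_eur(net: Decimal, vat_rate: str) -> Decimal:
    """Add VAT to a net amount; vat_rate is a fraction such as "0.19" (example only)."""
    return net * (Decimal(1) + Decimal(vat_rate))


if __name__ == "__main__":
    net = image_cost_eur(1000, "0.005")  # 1,000 images at 0.005 € each
    print(net, gross_eur(net, "0.19"))
```

For example, 1,000 images at 0.005 € cost 5.00 € net, or 5.95 € with an example rate of 19% applied.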

Purpose-Built HPC GPU Clusters for AI Workloads

Frequently Asked Questions

The most important information at a glance. Contact us if you have any questions.